
One Command, Done: Integrating Air API with a ClawHub Plugin

Hi, I’m CY Lee from the DevOps/SRE team. With the launch of Air API, we’ve been building out our internal infrastructure monitoring system. Along the way, we developed an OpenClaw plugin, and in this post I’d like to walk you through what we built and why it matters. 🙂 Before We Start: If you’ve used OpenClaw for a while, you’ve probably experienced something like this at least once. The moment you try to connect an external model provider, you find yourself going through the

Apr 16

How Many Tokens Per Month Before Self-Hosting Your GPU Becomes Cheaper?

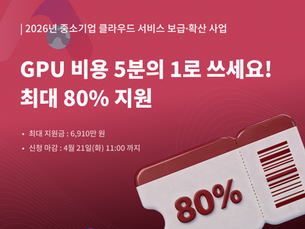

If you've been running an AI service for any length of time, you've probably hit this question at some point. "Is using an API actually the cheaper option? Or would it be better to just buy a GPU and run it ourselves?" As model performance converges, cost has become the decisive battleground. Teams at every scale are starting to run the numbers on which approach is actually cheaper for their usage volume — and the answer changes significantly depending on how much you're act

Apr 14

Google's TurboQuant — The Era of Serving LLMs Without Expensive GPUs Is Getting Closer

Google’s TurboQuant reduces KV cache memory usage in LLM inference without sacrificing accuracy. Learn why 80GB GPUs were needed—and why mid-range GPUs may now be enough.

Mar 30
